Concepts and Terminologies

This page explains the concepts and terminology used in AnySee, with links to further details, to help users better understand the service.

Entities

An entity is a data type that contains a structured vector data field, which serves as the entity's indicator.

Different entity types may have different endpoints and data structures.

As of August 2022, AnySee offers the following entity types.

Models

A model is an enumerated string that specifies which AI computer vision model processes the uploaded image. Internally, a model is a composition of several modules, each handling a specific function during image processing. Processing starts with the detection module and always passes through the alignment, blurriness, and head pose modules to produce intermediate outputs. From these outputs, face features and attributes can be extracted. Quality, which is calculated from the alignment and blurriness values, is not strictly a part of the model.

The processing order of a face model
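The processing order described above can be sketched as a simple data flow. This is only an illustration under stated assumptions: `run_face_pipeline` and the `modules` dictionary of callables are hypothetical names, not AnySee APIs, and the text does not say whether alignment, blurriness, and head pose run sequentially or independently, so the sketch treats them as independent steps on the detected face.

```python
def run_face_pipeline(image, modules):
    """Call hypothetical module callables in the order the text describes."""
    # 1. Detection always runs first.
    face = modules["detection"](image)

    # 2. Alignment, blurriness, and head pose produce the intermediate outputs.
    intermediate = {
        "alignment": modules["alignment"](face),
        "blurriness": modules["blurriness"](face),
        "head_pose": modules["head_pose"](face),
    }

    # 3. Features and attributes are extracted using the intermediate outputs.
    result = {
        "features": modules["features"](face, intermediate),
        "attributes": modules["attributes"](face, intermediate),
    }

    # 4. Quality is not strictly part of the model; it only consumes the
    #    alignment and blurriness values.
    result["quality"] = modules["quality"](
        intermediate["alignment"], intermediate["blurriness"]
    )
    return result
```

Passing the modules in as callables keeps the sketch independent of any real implementation; each one can be replaced by a stub when experimenting with the ordering.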

All models on AnySee are pre-trained and static; they are never re-trained. Users should specify the model when calling an API to upload an image.

In most cases, one model can handle only one entity type. Specifying the wrong model usually leads to an error. Even if the call succeeds, the accuracy of the result cannot be guaranteed.

Vectors generated by different models are incompatible, even if they belong to the same entity type. Since AnySee does not store any images, switching to another model requires re-uploading all original images. Keep the image data on your own service if you plan to upgrade the model in the future.
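One way to respect this rule on the client side is to record the model name next to every stored vector and check it before any comparison. The following is a minimal sketch, not part of the AnySee API: the `StoredVector` type and the `are_comparable` helper are hypothetical names introduced for illustration, and only the model identifiers come from the model list on this page.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class StoredVector:
    """Hypothetical client-side record: a vector plus the model that produced it."""
    model: str
    values: Tuple[float, ...]


def are_comparable(a: StoredVector, b: StoredVector) -> bool:
    """Vectors may be compared only if the same model generated both."""
    return a.model == b.model and len(a.values) == len(b.values)


old = StoredVector("JCV_FACE_J10000", (0.1, 0.2, 0.3))
new = StoredVector("JCV_FACE_K25000", (0.1, 0.2, 0.3))
print(are_comparable(old, new))  # the models differ, so this prints False
```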

Each model has different requirements for the input image. Refer to the detail page of the image under each entity.

As of June 2023, AnySee offers the following models.

- JCV_FACE_K25000

- JCV_FACE_J10000

Vectors

A vector (or a feature vector) is a multi-dimensional quantitative data set. During image recognition, a model processes the input image and outputs a feature vector.

The image of vector generation

The extracted feature vector, in the form of a multi-dimensional float vector, becomes an abstract indicator of the entity included in the image. Applying a specific similarity function (commonly cosine similarity or Euclidean distance) to two such vectors quantifies the similarity between the corresponding entities.
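The two similarity functions named above can be written in a few lines. This is a generic sketch using only the Python standard library; it does not show how AnySee computes or scales its own similarity scores, and the function names are chosen for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between a and b; higher means more similar."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def euclidean_distance(a, b):
    """Straight-line distance between a and b; lower means more similar."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))
```

Note the opposite directions of the two measures: a larger cosine similarity and a smaller Euclidean distance both indicate more similar entities.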

Search

In some use cases, users want to find the most similar entity among a pre-created group of entities. Calculating the similarity scores of all possible pairs achieves that purpose but consumes too many resources. In practice, a nearest-neighbor search algorithm is adopted to reduce search time.
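For contrast, the exhaustive approach described above can be sketched as follows. It scores the query against every stored vector, so its cost grows linearly with the size of the group. The `exhaustive_search` function, the entity IDs, and the sample vectors are hypothetical and only illustrate the idea.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two non-zero vectors of equal dimension."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def exhaustive_search(query, stored, top_k=1):
    """Score the query against every stored vector and keep the best top_k."""
    scored = [
        (entity_id, cosine_similarity(query, vector))
        for entity_id, vector in stored.items()
    ]
    # Highest similarity first.
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical group of three entities with 2-dimensional vectors.
stored = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.7, 0.7]}
best = exhaustive_search([0.9, 0.1], stored, top_k=2)
print([entity_id for entity_id, _ in best])  # prints ['a', 'c']
```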

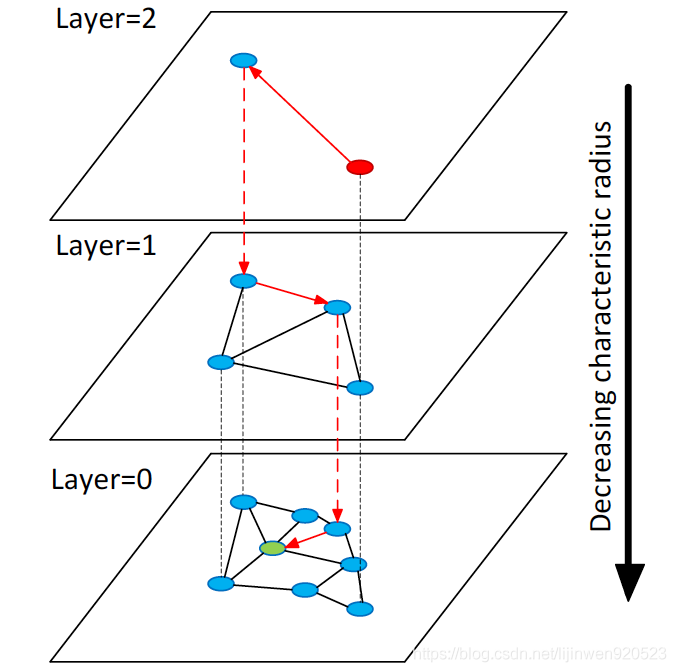

Since such an algorithm does not go through all possible pairs, it may miss some of the true nearest neighbors, so an appropriate algorithm needs to balance the recall rate against performance. AnySee uses the latest modified variant of HNSW (Hierarchical Navigable Small World) to search among 1 million vectors within 25 milliseconds at a recall rate close to 100%.
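Recall rate here means the share of the true nearest neighbors that the approximate search actually returns. A minimal sketch of that calculation, assuming the exact neighbors are known from an exhaustive search, is shown below; `recall_rate` is a hypothetical helper and does not describe how AnySee measures its own figures.

```python
def recall_rate(approximate_ids, exact_ids):
    """Fraction of the true nearest neighbors found by the approximate search."""
    if not exact_ids:
        raise ValueError("exact_ids must not be empty")
    found = set(approximate_ids) & set(exact_ids)
    return len(found) / len(exact_ids)


# The approximate search returned "d" instead of the true neighbor "c".
print(recall_rate(["a", "b", "d"], ["a", "b", "c"]))  # 2 of 3 found
```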

Illustration of the Hierarchical NSW idea

Quoted from Malkov, Yury A., and Dmitry A. Yashunin. "Efficient and robust approximate nearest neighbor search using hierarchical navigable small world graphs." IEEE Transactions on Pattern Analysis and Machine Intelligence (2018).

For more details on nearest-neighbor search algorithms, refer to Ann-Benchmarks.

Indexing

Like other database services, AnySee uses indexing to facilitate rapid and precise data searching. Most of the fixed fields are indexed, while customizable fields are not. This configuration allows flexible, high-performance search options, such as pre-filtering when searching and listing entities. Every field in this documentation is labeled with its indexing status.
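Pre-filtering means narrowing the candidates by a field value first and ranking only the survivors by vector similarity. The sketch below illustrates the idea in plain Python with a linear scan; it is not AnySee's implementation, which relies on indexes, and the `prefiltered_search` function and the `group` field are hypothetical.

```python
import math


def prefiltered_search(query, records, field, value, top_k=1):
    """Keep records whose field equals value, then rank them by distance."""
    candidates = [r for r in records if r.get(field) == value]
    # Smaller Euclidean distance means a closer match.
    candidates.sort(key=lambda r: math.dist(query, r["vector"]))
    return candidates[:top_k]


# Hypothetical records; "group" stands in for an indexed fixed field.
records = [
    {"id": "e1", "group": "staff", "vector": [0.1, 0.9]},
    {"id": "e2", "group": "visitor", "vector": [0.2, 0.8]},
    {"id": "e3", "group": "staff", "vector": [0.9, 0.1]},
]
hits = prefiltered_search([0.2, 0.8], records, "group", "staff")
print([r["id"] for r in hits])  # prints ['e1']
```

Although `e2` is the closest vector overall, it is excluded before ranking because its `group` value does not match the filter.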

Some VSS (Vector Search & Storage) engines cannot update or delete vectors in real time without a re-indexing process, which requires stopping the service for a while. AnySee, by contrast, provides real-time vector operations without any downtime, eliminating the possibility of returning already deleted data in a search.
